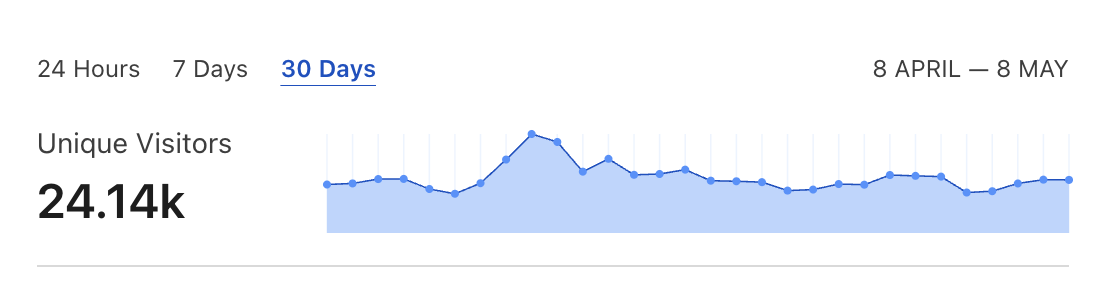

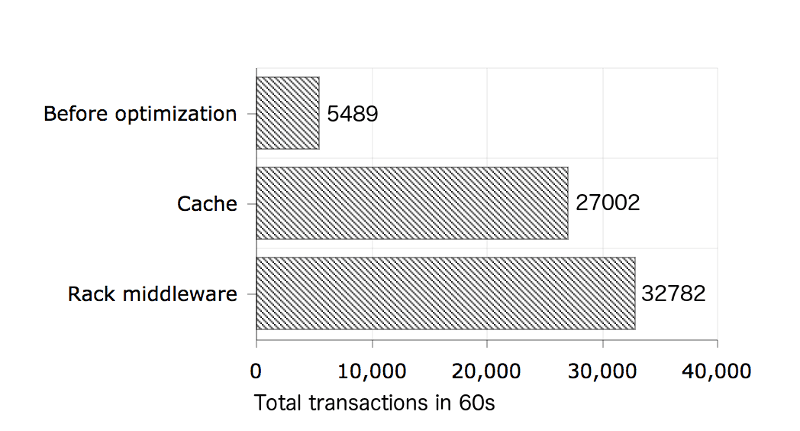

According to a (somewhat exaggerated) take on the Pareto principle, 5% of your Rails app’s endpoints could account for 95% of its performance issues. In this blog post, I describe how I improved the performance of my Rails application’s bottleneck endpoint by over 500% using a simple Redis caching technique and a custom Rack middleware.

Over 500% performance improvement

Benchmarks were conducted using Siege on a 2015 MacBook Pro with 16 GB of RAM and a 2.2 GHz Intel Core i7. I ran them against the Rails app running locally in production mode, with a copy of the production database, on a Puma server with 2 workers of 16 threads each. I used the following Siege settings:

```shell
siege --time=60s --concurrent=20
```

Read on if you’re interested in how the improvement was achieved.

Before Rails performance optimization

Detailed benchmark results before I started the optimization process:

```
Transactions:                5489 hits
Availability:              100.00 %
Elapsed time:               59.47 secs
Data transferred:          868.35 MB
Response time:               0.22 secs
Transaction rate:           92.30 trans/sec
Throughput:                 14.60 MB/sec
Concurrency:                19.94
Successful transactions:     5489
Failed transactions:            0
Longest transaction:         0.63
Shortest transaction:        0.03
```

I decided this endpoint would be a good candidate for optimization because it was used both by the landing page’s React frontend and by all of the iOS mobile clients on startup. Also, the data was the same regardless of which user requested it, so I could cache a single version and serve it to everyone.

One caveat was that the endpoint accepts an optional discounted_by param. Because it is continuous (any value from 0.0 to 100.0 is valid), it would be impossible to cache every potential query. If your endpoint accepts only a single param of a discrete type (e.g. category), you could consider caching all of the possible results.
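For the discrete case, caching every possible result could look roughly like the sketch below: one cache key per param value, all warmed up front, with unknown values returning nil so the caller can fall back to a regular query. The category list, the key format, and all method names are hypothetical, and MemoryStore is only an in-memory stand-in exposing the same get/set calls as a redis-rb client such as $redis, so the sketch runs without a Redis server.

```ruby
require "json"

# Hypothetical: every value the discrete param can take
CATEGORIES = %w[books electronics toys].freeze

# Hypothetical key scheme: one cache entry per category
def category_cache_key(category)
  "promotions:category:#{category}"
end

# Pre-compute the JSON for every possible param value.
# The block returns the (already serializable) products for one category.
def warm_category_caches(store, categories = CATEGORIES)
  categories.each do |category|
    store.set(category_cache_key(category), { products: yield(category) }.to_json)
  end
end

# Look up a cached response; nil means "not a known value or not cached yet"
def cached_promotions_for(store, category)
  return nil unless CATEGORIES.include?(category)

  store.get(category_cache_key(category))
end

# In-memory stand-in with redis-rb style get/set, for trying the sketch locally
class MemoryStore
  def initialize
    @data = {}
  end

  def set(key, value)
    @data[key] = value
    "OK"
  end

  def get(key)
    @data[key]
  end
end

store = MemoryStore.new
warm_category_caches(store) { |category| [{ name: "#{category} deal" }] }
puts cached_promotions_for(store, "toys") # prints {"products":[{"name":"toys deal"}]}
```

In a real app the warm-up would run in a background job, like the one used for the promotions cache in this post.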

I ended up caching the results of the query performed without the param and serving that cached version to clients that did not provide a discounted_by value in the request.

Add Redis cache for slow Active Record queries

Database-level optimization techniques have their limits. Once your data set grows large and your business logic obliges you to fetch data from a couple of joined tables, it might be difficult to achieve the desired performance in an SQL database without resorting to caching.

If you are using Sidekiq in your project, then you already have a Redis database in place. It is much simpler to use existing infrastructure than to add yet another dependency (e.g. Memcached). Redis provides a straightforward API for key-value storage, and you don’t need to add any special gems to use it as your cache.

If Heroku is your hosting provider, then Redis To Go is probably what you are using. In that case, enabling direct access to Redis is as simple as adding one file:

config/initializers/redis.rb

```ruby
require 'redis'

# One shared client; Redis To Go exposes its connection URL as REDISTOGO_URL
$redis = Redis.new(url: ENV.fetch("REDISTOGO_URL"))
```

I am using Sidekiq Cron to update my cache entry every half an hour:

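app/jobs/cache_updater_job.rb

```ruby
class CacheUpdaterJob
  include Sidekiq::Worker
  sidekiq_options queue: 'default'

  def perform
    # as_json keeps each product a Hash (assuming the serializer implements it);
    # to_json here would double-encode every product in the payload below
    products = Product.current_promotions(
      Product::GOOD_DISCOUNT
    ).map do |product|
      Product::Serializer.new(product).as_json
    end

    json_data = { products: products }.to_json

    # Store the ready-to-send JSON string under one well-known key
    $redis.set(
      Product::PROMOTIONS_CACHE_KEY,
      json_data
    )
  end
end
```

config/schedule.yml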

```yaml
update_promotions_cache:
  cron: "*/30 * * * *"
  class: "CacheUpdaterJob"
```

Updating the cache every 30 minutes works for my app’s use case. Even if you need to serve your clients almost live data, updating the cache every couple of seconds could still be more performant than fetching it from the database on every request.
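To put the refresh-interval trade-off in rough numbers, the snippet below counts how many times the promotions query would run during one 30-minute window. It is back-of-the-envelope arithmetic only: it assumes, purely for illustration, that traffic stays at the 92.30 requests per second Siege measured before any caching, which real traffic will not match.

```ruby
WINDOW_SECS = 30 * 60 # one 30-minute window
REQUEST_RATE = 92.30  # trans/sec from the pre-caching Siege run, used as assumed traffic

# Without the cache, every request runs the promotions query
def queries_without_cache(rate, window_secs)
  (rate * window_secs).round
end

# With the cache, only the refresh job runs it, once per (whole) refresh interval
def queries_with_cache(refresh_every_secs, window_secs)
  window_secs / refresh_every_secs
end

puts queries_without_cache(REQUEST_RATE, WINDOW_SECS) # prints 166140
puts queries_with_cache(30 * 60, WINDOW_SECS)         # prints 1 (refresh every 30 minutes)
puts queries_with_cache(5, WINDOW_SECS)               # prints 360 (refresh every 5 seconds)
```

Even an aggressive 5-second refresh runs the query hundreds of times less often than querying on every request would under that assumed load.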

Here’s what a Rails controller returning a cached response for requests without the discounted_by param looks like:

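```ruby
class API::PromotionsController < API::BaseController
  def index
    if (discounted_by = params[:discounted_by])
      # Custom threshold: query and serialize on every request.
      # as_json (not to_json) so render json: doesn't double-encode each product.
      products = Product.current_promotions(discounted_by).map do |product|
        Product::Serializer.new(product).as_json
      end

      render json: { products: products }
    else
      # Default query: return the pre-serialized JSON string straight from Redis
      self.content_type = "application/json"
      self.response_body = [
        $redis.get(Product::PROMOTIONS_CACHE_KEY) || ""
      ]
    end
  end
end
```

This version is ~5 times faster than the base one: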

```
Transactions:               27002 hits
Availability:              100.00 %
Elapsed time:               59.30 secs
Data transferred:         4271.65 MB
Response time:               0.04 secs
Transaction rate:          455.35 trans/sec
Throughput:                 72.03 MB/sec
Concurrency:                19.96
Successful transactions:    27002
Failed transactions:            0
Longest transaction:         1.21
Shortest transaction:        0.00
```

Not only does it eliminate the need for a database query, it also reduces memory usage because you don’t need to instantiate Active Record objects. Depending on your data size, even JSON serialization itself could be a significant performance overhead.
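To get a feel for the serialization cost alone, here is a rough, stdlib-only micro-benchmark comparing serializing a payload on every request with reusing a string serialized once, which is what the Redis-backed path effectively does. The payload shape and size are made up, and the database and Active Record are left out entirely, so only the relative difference on your own machine means anything.

```ruby
require "json"

# Hypothetical payload: 500 products with a handful of attributes each
PRODUCTS = Array.new(500) do |i|
  { id: i, name: "Product #{i}", price: (9.99 + i).round(2), discount: i % 50 }
end

# Uncached path: build the JSON string from Ruby objects on every request
def serialize_on_request
  { products: PRODUCTS }.to_json
end

# Cached path: the string is built once (the refresh job's role) and then reused
CACHED_JSON = serialize_on_request.freeze

def serve_cached
  CACHED_JSON
end

# Wall-clock time for running the block `iterations` times
def time_it(iterations)
  start = Process.clock_gettime(Process::CLOCK_MONOTONIC)
  iterations.times { yield }
  Process.clock_gettime(Process::CLOCK_MONOTONIC) - start
end

serialize_secs = time_it(200) { serialize_on_request }
cached_secs = time_it(200) { serve_cached }
printf("serialize every time: %.4fs, reuse cached string: %.6fs\n", serialize_secs, cached_secs)
```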

You can also check out my other blog post for more tips on how to reduce memory usage in Rails apps.

Optimize Rails with Rack middleware

Each request has to pass through all of the following Rails middlewares before it hits your application’s code:

```
use Rack::Sendfile
use ActionDispatch::Static
use ActionDispatch::Executor
use ActiveSupport::Cache::Strategy::LocalCache::Middleware
use Rack::Runtime
use Rack::MethodOverride
use ActionDispatch::RequestId
use ActionDispatch::RemoteIp
use Rails::Rack::Logger
use ActionDispatch::ShowExceptions
use ActionDispatch::DebugExceptions
use ActionDispatch::Callbacks
use ActionDispatch::Cookies
use ActionDispatch::Session::CookieStore
use ActionDispatch::Flash
use Rack::Head
use Rack::ConditionalGet
use Rack::ETag
```

You can shave off a couple of milliseconds by bypassing the default stack and sending the response to the client straight from your custom Rack middleware. You can do this by adding the following Rack middleware:

lib/cache_middleware.rb

```ruby
class CacheMiddleware
  def initialize(app)
    @app = app
  end

  def call(env)
    req = Rack::Request.new(env)
    cache_path = req.path == "/api/promotions.json"
    no_param = req.params["discounted_by"].nil?

    if cache_path && no_param
      # Short-circuit: answer with the cached JSON, skipping the rest of the stack
      [
        200,
        { "Content-Type" => "application/json" },
        [$redis.get(Product::PROMOTIONS_CACHE_KEY) || ""]
      ]
    else
      # Everything else continues down the regular Rails middleware stack
      @app.call(env)
    end
  end
end
```

Then configure your Rails app to insert it at the beginning of its middleware stack:

config/environments/production.rb

```ruby
require_relative "../../lib/cache_middleware" # require explicitly so the constant exists while the app boots
config.middleware.insert_before 0, CacheMiddleware
```

It checks whether a request targets your optimized endpoint and has no value for the optional param. If so, it returns the cached JSON to the client; if not, it passes the request on to the next middleware in the stack.
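For experimenting outside Rails, the short-circuit can also be written as a dependency-free sketch. This is a variation on the middleware above, not a drop-in copy: the class name CachedEndpoint and its keyword arguments are hypothetical, it reads the query string with the standard library’s URI.decode_www_form instead of Rack::Request (so it ignores POST body params), it only answers GET requests, and on a cache miss it hands the request to the rest of the stack instead of replying with an empty body. Rack only requires call(env) to return a [status, headers, body] triple, so a lambda is enough to stand in for the Rails app below it.

```ruby
require "uri"

# Rack-compatible middleware sketch: serves one endpoint from a cache and
# delegates every other request (and every cache miss) to the wrapped app.
class CachedEndpoint
  def initialize(app, store:, path:, cache_key:, bypass_param:)
    @app = app
    @store = store # anything with redis-rb style #get, e.g. $redis
    @path = path
    @cache_key = cache_key
    @bypass_param = bypass_param
  end

  def call(env)
    if env["REQUEST_METHOD"] == "GET" && env["PATH_INFO"] == @path && !param_given?(env)
      cached = @store.get(@cache_key)
      # Lowercase header name, as Rack 3 requires
      return [200, { "content-type" => "application/json" }, [cached]] if cached
    end

    # Other paths, requests carrying the param, or a cold cache
    @app.call(env)
  end

  private

  def param_given?(env)
    URI.decode_www_form(env["QUERY_STRING"].to_s).any? { |name, _value| name == @bypass_param }
  rescue ArgumentError
    true # unparseable query string: let the full stack deal with it
  end
end

# Example wiring with a fake store and a stand-in for the Rails app
FakeRedis = Struct.new(:data) do
  def get(key)
    data[key]
  end
end

rails_app = ->(_env) { [200, { "content-type" => "application/json" }, ['{"from":"rails"}']] }
middleware = CachedEndpoint.new(
  rails_app,
  store: FakeRedis.new({ "promotions" => '{"products":[]}' }),
  path: "/api/promotions.json",
  cache_key: "promotions",
  bypass_param: "discounted_by"
)
```

Falling through on a miss means a cold cache no longer produces an empty 200 response from the middleware; what the client gets then depends on how the application itself handles a missing cache entry.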

This method is a bit extreme, and it makes sense only if you are experiencing very heavy load. For the requests it intercepts, it also bypasses most of the tools Rails provides out of the box, like cookies, session management, and logging. Still, depending on your use case, those few milliseconds saved could translate into a serious reduction in hosting costs.

These are the detailed benchmark results with the custom middleware, a ~20% improvement over the “cache only” version:

```
Transactions:               32782 hits
Availability:              100.00 %
Elapsed time:               59.94 secs
Data transferred:         5186.03 MB
Response time:               0.01 secs
Transaction rate:          546.91 trans/sec
Throughput:                 86.52 MB/sec
Concurrency:                19.97
Successful transactions:    32782
Failed transactions:            0
Longest transaction:         0.16
Shortest transaction:        0.00
```

Final remarks

To keep things simple, I did not cover more advanced techniques like automatic cache invalidation or Rails’ built-in cache support. Check out the redis-rails gem and the official guides if you are interested in those. Please remember that the benchmarks were conducted on a local machine, so they don’t take networking overhead into account. The 500% gain is what I managed to achieve in application-specific code.
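The relative gains quoted in this post can be recomputed from the Siege transaction rates of the three runs; the snippet below does only that arithmetic, expressing each step as a throughput ratio.

```ruby
# Transaction rates (trans/sec) reported by Siege in the three runs above
RATES = {
  baseline: 92.30,
  redis_cache: 455.35,
  cache_middleware: 546.91
}.freeze

# Throughput ratio between two runs
def speedup(from, to)
  RATES.fetch(to) / RATES.fetch(from)
end

printf("Redis cache vs baseline:   %.2fx\n", speedup(:baseline, :redis_cache))         # 4.93x
printf("middleware vs Redis cache: %.2fx\n", speedup(:redis_cache, :cache_middleware)) # 1.20x
printf("middleware vs baseline:    %.2fx\n", speedup(:baseline, :cache_middleware))    # 5.93x
```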