In this tutorial, I’ll describe a couple of non-obvious Ruby on Rails mistakes that could bring down your production system. They are so sneaky that they could get past the review process of even more experienced developers. Please don’t ask me how I know them.

Overusing ActiveRecord::Base.transaction

ActiveRecord makes it just to easy to wrap any arbitrary chunk of code in a database transaction. Have a look at the following example:

post = Post.find(params.fetch(:id))

Post.transaction do

post.update!(params.fetch(:post_params))

post.user.update!(params.fetch(:user_params))

endAt first glance, it might look correct. We want to update the post and its user but rollback the error to keep the state consistent.

The problem is that by wrapping the code execution in the transaction, we are granting a RowExclusiveLock on all the updated objects until the whole block of code finished running. Any thread trying to update the same post or user will have to idly wait for the previous transaction to finish.

Imagine that the post uses Carrierwave with S3 for media attachments. Updating an image_url attribute will open an HTTP connection, download the asset, and upload it back to AWS S3. This is all happening in a database transaction locking access to those objects for way too long.

If you are working in a Ruby multithreaded environment e.g., with Puma or Sidekiq, a broad database transaction scope is a recipe for introducing deadlocks. Deadlock is two more or database locks blocking each other and not able to continue execution. They usually start popping only with high enough load.

I’ve learned the hard way that code that worked seemingly correct for the last couple of months suddenly started locking. It was not possible to track a recent change to the codebase that introduced the problem.

Overusing ActiveRecord transactions must be avoided. Validations and integrity checks before the actual save should be used instead of relying on Rails to magically rollback the invalid state.

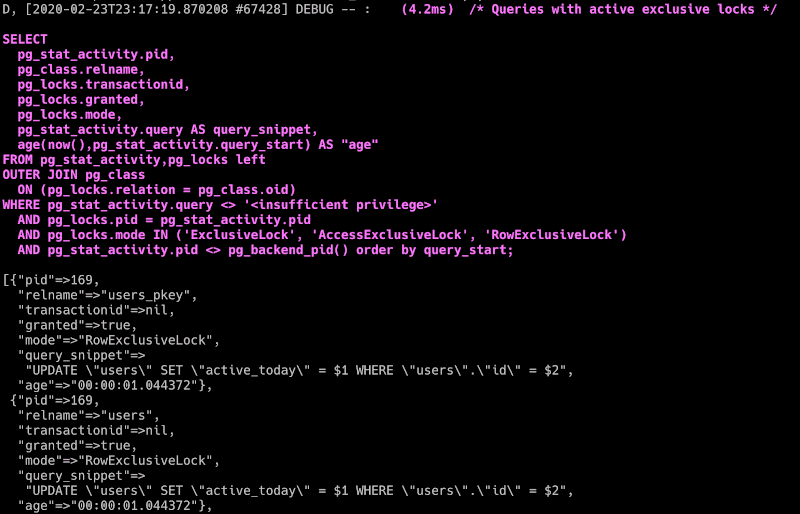

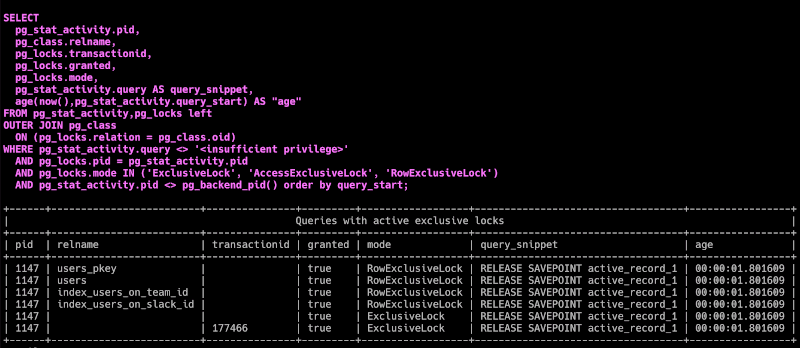

Detecting deadlocks in Rails apps

If your production is already down a or database is sluggish for no apparent reason, you might have deadlocks. Unless you perform long-running background queries, a healthy Rails app database should never lock for more than a couple of seconds at most.

To verify that you can use rails-pg-extras gem. It offers a simple API for displaying current exclusive locks:

RailsPgExtras.locks

You can write a simple monitoring script checking if locks are taking too long and should be looked into:

LOCKS_THRESHOLD_MS = 200

long_locks = RailsPgExtras.locks(in_format: :hash).select do |lock|

(ActiveSupport::Duration.parse((lock.fetch("age")).to_f * 1000) > LOCKS_THRESHOLD_MS

end

raise "Long running locks: #{long_locks}" if long_locks.present?RailsPgExtras under the hood executes lightweight SQL queries . You can safely run those checks even a dozen times per minute (e.g., in a recurring Sidekiq job) without negatively affecting your database.

Killing deadlocked database connection

You can cherry-pick a stuck transaction to cancel using its PID number by running the following SQL:

ActiveRecord::Base.connection.execute("SELECT pg_cancel_backend(#{pid})")In case it does not work, you can be a bit more aggressive by killing the connection:

ActiveRecord::Base.connection.execute("SELECT pg_terminate_backend(#{pid})")Alternatively, you can go for “turn it off and on again” approach by killing all the active database connections and restarting the Ruby processes.

RailsPgExtras.kill_allIf your production system is in a bad enough state than even initializing the Rails console process is not possible than executing this snippet of raw SQL might do the trick:

SELECT pg_terminate_backend(pid) FROM pg_stat_activity

WHERE pid <> pg_backend_pid()

AND query <> '<insufficient privilege>'

AND datname = current_database();Console sandbox mode

Rails console offers a handy --sandbox flag that executes the whole process inside a database transaction and rolls back all the changes when it terminates. It might seem like a perfect idea to test things out on production with this flag on, e.g., before running a complex migration or when testing a new feature with a production dataset. Imagine we want to test out your activity reporting system after marking all the users as recently active:

User.update_all(last_active_at: Time.current)

User::ActivityReport.call(from: 1.day.ago, to: 1.day.from_now)It might look harmless at first glance. Users are updated using a single SQL query that would not trigger any callbacks and should be fast to execute even on larger collections. “It will just rollback when I close the console.”

While you are playing around with the report, it’s unfortunately quite probable that your production is already down. Updating any ActiveRecord object in the sandbox mode effectively grants the console process connection a long-lasting RowExclusiveLock on the corresponding database row.

Any background worker or web server trying to update objects that you “harmlessly” modified in sandbox mode is suspended waiting for the Rails console to release its global lock. Things can get nasty quite fast with idle processes queueing up. In that case, even exiting the console process might be difficult because the transaction could have a hard time doing the correct rollback in a database overloaded with idle connections.

In that scenario, you sometimes must resort to manually killing the hanging connections and restarting the processes.

Unsafe database migrations

Database schema and data migrations are an often cause of Ruby on Rails production systems going down. They are especially tricky to test appropriately because issues usually arise only with big enough dataset and real traffic on the production database. It’s challenging to simulate a realistic usage load in the test or staging environments.

I was planning to describe a couple of risky database migration scenarios and how to prevent them. While doing the research, I’ve realized that the readme of strong_migrations gem does an excellent job of explaining those problems and how to mitigate them. Instead of duplicating the info, I’ll leave the link here for reference.

Exhausting the database connections pool

Balancing the number of web servers and background worker threads with database pool and max connections is not straightforward. Getting the config wrong can result in downtime because threads will not be able to obtain a database connection and will raise the ActiveRecord::ConnectionTimeoutError.

Let’s start be checking out where those different values can be configured:

config/puma.rb

threads_count = ENV.fetch('RAILS_MAX_THREADS') { 3 }.to_i

threads threads_count, threads_count

port ENV.fetch("PORT") { 3000 }

environment ENV.fetch("RAILS_ENV") { "production" }

workers ENV.fetch('WEB_CONCURRENCY') { 2 }.to_i

preload_app!

on_worker_boot do

ActiveRecord::Base.establish_connection if defined?(ActiveRecord)

end

plugin :tmp_restartconfig/sidekiq.yml

---

:concurrency: 4

:queues:

- urgent

- defaultconfig/database.yml

default: &default

adapter: postgresql

encoding: unicode

pool: <%= ENV.fetch("RAILS_MAX_THREADS") { 5 } %>Setting the max_connections config depends on your PostgreSQL deployment. To check the current value, you have to run this SQL:

show max_connections;Now for the magic formula:

To avoid pool exhaustion, you must always use a less or equal number of threads from the Ruby single process than the database pool configured in config/database.yml file. Additionally, the number of threads accessing your database across all the processes must be smaller than the value configured in the max_connections PostgreSQL settings.

Remember that you cannot set max_connection to any arbitrary value. Multiple concurrent connections will increase a load on a database, so its specs must be adjusted accordingly. PGTune can help you with that.

Summary

I hope that reading this blog post will reduce the number of “Production is down” alerts you’ll see. Let me know in the comments below if you know some more interesting ways to kill your production servers.