The ELK (Elastic) stack is a popular open-source solution for analyzing web logs. In this tutorial, I describe how to set up Elasticsearch, Logstash and Kibana on a barebones VPS to analyze NGINX access logs. I don’t dwell on details but instead focus on what you need to get up and running with ELK-powered log analysis quickly.

Compared to other available tools, ELK gives you a lot of flexibility in how you analyze and present your log data. Hosted solutions are a bit pricey, with monthly costs starting around $50 for a reasonable feature set. By following this tutorial you can set up your own log analysis machine for the cost of a simple VPS. You don’t need to be a DevOps pro to do it yourself.

The ELK stack will reside on a server separate from your application. NGINX logs will be sent to it over an SSL-protected connection using Filebeat. We will also set up GeoIP data and a Let’s Encrypt certificate for Kibana dashboard access.

This step-by-step tutorial covers version 7.7.0 of the ELK stack components, the newest at the time of writing, on Ubuntu 18.04. Check the release notes for the current ELK version and potential breaking changes.

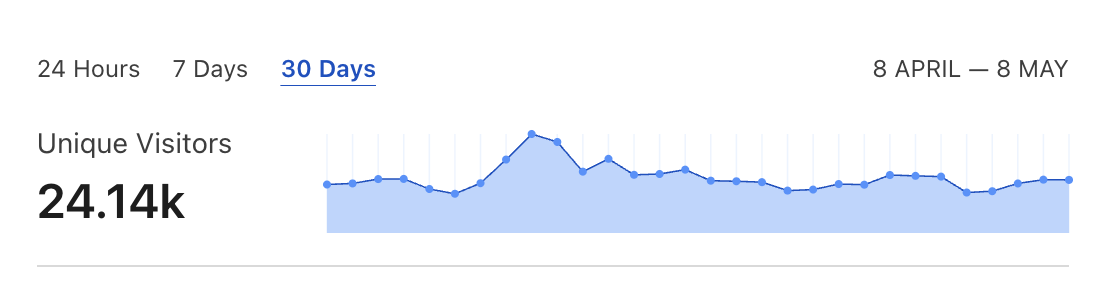

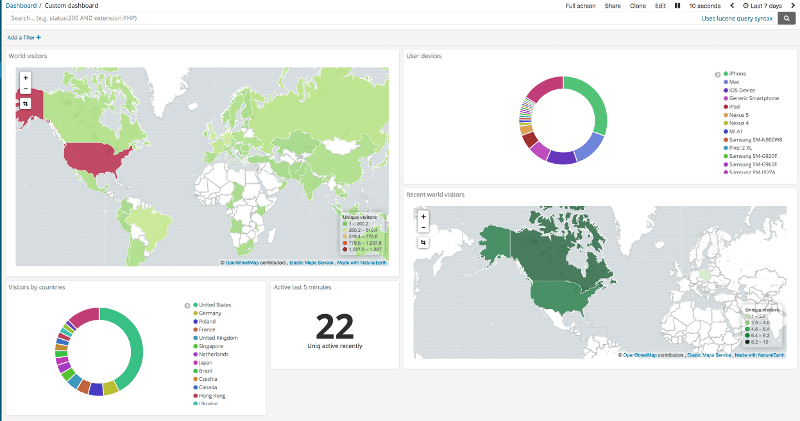

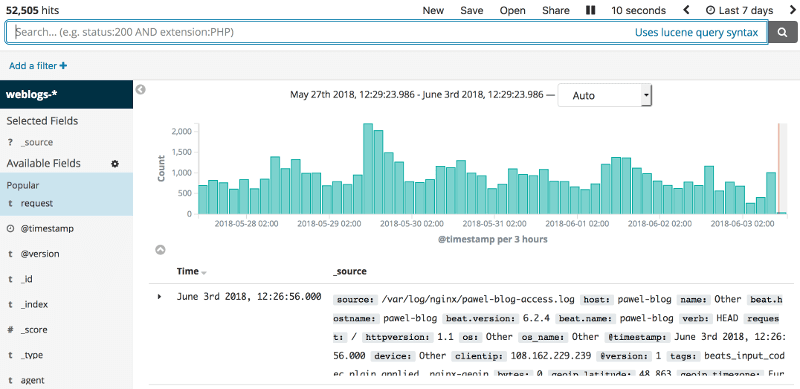

Just to show you a sneak peak of what we will be building:

Currently, I use Kibana to analyze the traffic logs of this blog and of the Abot for Slack landing page.

Let’s get started.

Setup VPS

You need to start by purchasing a barebones VPS and adding SSH access to it. I don’t elaborate on how to do that in this tutorial.

You will also need a domain or a subdomain that points to your VPS server IP through a DNS A record. If you use Cloudflare for your DNS, remember not to use their CDN for this domain, because it changes the IP the domain resolves to and can cause trouble with the setup.
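Before moving on, it can help to confirm that the A record really resolves to your VPS. The following is a minimal Python sketch and not part of the original setup: `resolves_to` is a hypothetical helper name, and the domain and IP in the usage comment are placeholders. With the Cloudflare CDN enabled, the lookup would return a Cloudflare address instead of your VPS IP, so the check would report a mismatch.

```python
import socket


def resolves_to(hostname, expected_ip, resolver=socket.gethostbyname):
    """Return True if `hostname` resolves (IPv4) to `expected_ip`.

    `resolver` is injectable so the check can be exercised without real DNS.
    """
    try:
        return resolver(hostname) == expected_ip
    except socket.gaierror:
        # The name does not resolve at all, e.g. the record has not propagated yet.
        return False


# Usage (placeholder values, substitute your own domain and VPS address):
#   resolves_to("my-elk-stack-vps.com", "203.0.113.10")
```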

For my ELK stack server, I use a 4GB Hetzner Cloud VPS with Ubuntu 18.04. It runs the Elasticsearch, Kibana and Logstash processes. With my current amount of traffic log data, 4GB of RAM has been enough so far.

Install ELK dependencies

Access your VPS and run the following commands as a sudo user to install required dependencies:

Java

Java is required for both Elasticsearch and Logstash.

sudo apt install default-jdk

You can verify that the installation was successful by typing:

java -version

The result should look similar to:

OpenJDK Runtime Environment (build 11.0.7+10-post-Ubuntu-2ubuntu218.04)

OpenJDK 64-Bit Server VM (build 11.0.7+10-post-Ubuntu-2ubuntu218.04, mixed mode, sharing)

Elasticsearch

Elasticsearch is a database where logs are stored after Logstash processes them. It can be quite memory hungry so make sure to monitor your RAM usage when working with it on a low-end VPS.
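One simple way to keep an eye on RAM is to read `/proc/meminfo`. The snippet below is a small Python sketch under that assumption; the function names are hypothetical, and it only reports the kernel's `MemAvailable` estimate as a share of `MemTotal`, nothing specific to Elasticsearch.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of {field: integer value}."""
    info = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if sep and fields and fields[0].isdigit():
            info[key.strip()] = int(fields[0])
    return info


def available_ratio(info):
    """Fraction of total RAM that the kernel estimates is still available."""
    return info["MemAvailable"] / info["MemTotal"]


def read_available_ratio(path="/proc/meminfo"):
    """Convenience wrapper that reads the live values on a Linux host."""
    with open(path) as handle:
        return available_ratio(parse_meminfo(handle.read()))
```

Calling `read_available_ratio()` from a cron job and alerting when the value drops below a threshold you pick would be one way to use it.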

Let’s install it by running:

wget -qO - https://artifacts.elastic.co/GPG-KEY-elasticsearch | sudo apt-key add -

sudo apt-get install apt-transport-https

echo "deb https://artifacts.elastic.co/packages/7.x/apt stable main" | sudo tee -a /etc/apt/sources.list.d/elastic-7.x.list

sudo apt-get update

sudo apt-get install elasticsearch

Now uncomment the following lines in /etc/elasticsearch/elasticsearch.yml:

http.port: 9200

network.host: localhost

Start the Elasticsearch process:

sudo service elasticsearch start

Then verify that it is running by making a cURL request:

curl -v http://localhost:9200

The JSON response should look something like:

{

"name" : "elk-1",

"cluster_name" : "elasticsearch",

"cluster_uuid" : "6HppkNKvTT6glu6oswimXQ",

"version" : {

"number" : "7.7.0",

"build_flavor" : "default",

"build_type" : "deb",

"build_hash" : "81a1e9eda8e6183f5237786246f6dced26a10eaf",

"build_date" : "2020-05-12T02:01:37.602180Z",

"build_snapshot" : false,

"lucene_version" : "8.5.1",

"minimum_wire_compatibility_version" : "6.8.0",

"minimum_index_compatibility_version" : "6.0.0-beta1"

},

"tagline" : "You Know, for Search"

}

Kibana

Kibana is the visualization layer of the ELK stack. It queries Elasticsearch for log data and offers a multitude of ways to analyze and present it.

First, let’s install it:

sudo apt-get install kibana

You can check out the contents of /etc/kibana/kibana.yml to see the default configuration, but you don’t need to edit anything.

Now you can start the Kibana process by typing:

sudo service kibana start

Just like with Elasticsearch, you can verify that it is running by using a cURL command:

curl -v http://localhost:5601

Now let’s expose our Kibana dashboard to the outside world using NGINX.

NGINX

This is not the NGINX we will be analyzing logs from. This one provides password-protected access to the Kibana instance running on our ELK server. We will use a Let’s Encrypt SSL certificate for secure access. Install NGINX and Certbot and request the certificate by typing:

sudo apt-get update

sudo apt-get install nginx apache2-utils

sudo apt-get install python-certbot-nginx

sudo certbot --nginx -d my-elk-stack-vps.com

To automatically renew your certificate, add this line to the /etc/crontab file:

@monthly root certbot -q renew

Now you need to set a password for your Kibana HTTP basic auth user:

sudo htpasswd -c /etc/nginx/htpasswd.users kibanaadmin

Next, configure NGINX to use the generated certificates and proxy traffic from your VPS root path to Kibana:

/etc/nginx/sites-enabled/default

server {
    listen [::]:443 ssl ipv6only=on; # managed by Certbot
    listen 443 ssl; # managed by Certbot
    ssl_certificate /etc/letsencrypt/live/my-elk-stack-vps.com/fullchain.pem; # managed by Certbot
    ssl_certificate_key /etc/letsencrypt/live/my-elk-stack-vps.com/privkey.pem; # managed by Certbot
    include /etc/letsencrypt/options-ssl-nginx.conf; # managed by Certbot
    ssl_dhparam /etc/letsencrypt/ssl-dhparams.pem; # managed by Certbot

    auth_basic "Restricted Access";
    auth_basic_user_file /etc/nginx/htpasswd.users;

    location / {
        proxy_pass http://localhost:5601;
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection 'upgrade';
        proxy_set_header Host $host;
        proxy_cache_bypass $http_upgrade;
    }
}

server {
    if ($host = my-elk-stack-vps.com) {
        return 301 https://$host$request_uri;
    } # managed by Certbot

    listen 80 ;
    listen [::]:80 ;
    server_name my-elk-stack-vps.com;
    return 404; # managed by Certbot
}

Make sure that your NGINX config is correct and restart the server:

sudo nginx -t

sudo service nginx restart

This config assumes that there is a DNS A record for the my-elk-stack-vps.com domain pointing to your VPS server IP.

You should now be able to see your Kibana dashboard by going to my-elk-stack-vps.com and entering your credentials.

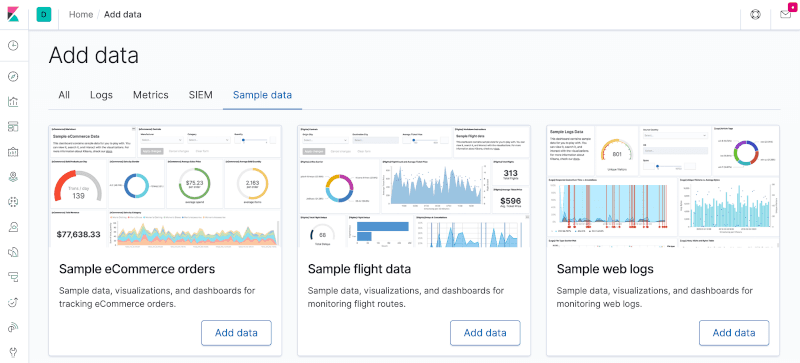

You can add a sample dataset to play around with, or continue the tutorial to import your own log entries.
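If you prefer to confirm from a script that the NGINX proxy and basic auth are working, a sketch like the one below may help. It is not part of the original setup: the function names are hypothetical, the credentials are whatever you set with htpasswd, and it assumes Kibana's `/api/status` endpoint is reachable through the proxy.

```python
import base64
import urllib.request


def kibana_status_request(base_url, username, password):
    """Build a GET request for Kibana's status endpoint with HTTP basic auth."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(base_url.rstrip("/") + "/api/status")
    request.add_header("Authorization", "Basic " + token)
    return request


def kibana_is_reachable(base_url, username, password, timeout=10):
    """Return True if the proxied Kibana answers the status request with HTTP 200."""
    request = kibana_status_request(base_url, username, password)
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # URLError and HTTPError (e.g. 401 for bad credentials) are OSError subclasses.
        return False


# Usage (placeholder domain and password):
#   kibana_is_reachable("https://my-elk-stack-vps.com", "kibanaadmin", "your-password")
```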

Logstash and SSL certificates

Logstash accepts log data sent from your client server by Filebeat, then transforms it and feeds it into Elasticsearch.

Install it by running:

sudo apt-get install logstash

Configure SSL certificates

Because you will be sending your logs from a separate server, you should do it over a secure connection. To do that, you need to generate a self-signed certificate:

sudo mkdir -p /etc/elk-certs

cd /etc/elk-certs

sudo openssl req -subj '/CN=my-elk-stack-vps.com/' -x509 -days 3650 -batch -nodes -newkey rsa:2048 -keyout elk-ssl.key -out elk-ssl.crt

sudo chown logstash elk-ssl.crt

sudo chown logstash elk-ssl.key

Remember to substitute my-elk-stack-vps.com with your domain name in the command generating the self-signed certificate. Later we will copy the resulting files to the client server for the Filebeat configuration.

Configure Logstash

Now you need to configure Logstash with the following files:

/etc/logstash/logstash.yml

path.data: /var/lib/logstash
path.config: /etc/logstash/conf.d
path.logs: /var/log/logstash

/etc/logstash/conf.d/logstash-nginx-es.conf

input {
  beats {
    port => 5400
    ssl => true
    ssl_certificate_authorities => ["/etc/elk-certs/elk-ssl.crt"]
    ssl_certificate => "/etc/elk-certs/elk-ssl.crt"
    ssl_key => "/etc/elk-certs/elk-ssl.key"
    ssl_verify_mode => "force_peer"
  }
}

filter {
  grok {
    match => [ "message" , "%{COMBINEDAPACHELOG}+%{GREEDYDATA:extra_fields}"]
    overwrite => [ "message" ]
  }

  mutate {
    convert => ["response", "integer"]
    convert => ["bytes", "integer"]
    convert => ["responsetime", "float"]
  }

  geoip {
    source => "clientip"
    add_tag => [ "nginx-geoip" ]
  }

  date {
    match => [ "timestamp" , "dd/MMM/YYYY:HH:mm:ss Z" ]
    remove_field => [ "timestamp" ]
  }

  useragent {
    source => "agent"
  }
}

output {
  elasticsearch {
    hosts => ["localhost:9200"]
    index => "weblogs-%{+YYYY.MM.dd}"
    document_type => "nginx_logs"
  }

  stdout { codec => rubydebug }
}

This config specifies the input and output for our logs and how they are formatted before being sent to Elasticsearch. GeoIP data is configured here as well. It also enforces a secure SSL connection, signed by the correct certificate, for logs sent by Filebeat.
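To make the filter stage less abstract, here is a rough Python sketch of what the grok, mutate and date steps do to a single access log line. It is an illustration only, not how Logstash is implemented: the regular expression is a simplified stand-in for COMBINEDAPACHELOG, `parse_access_line` is a hypothetical name, and a `-` byte count is mapped to 0 as a simplification.

```python
import re
from datetime import datetime, timezone

# Simplified stand-in for the fields that COMBINEDAPACHELOG extracts.
COMBINED_LOG = re.compile(
    r'(?P<clientip>\S+) (?P<ident>\S+) (?P<auth>\S+) '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?P<verb>\S+) (?P<request>\S+) HTTP/(?P<httpversion>[\d.]+)" '
    r'(?P<response>\d{3}) (?P<bytes>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def parse_access_line(line):
    """Turn one combined-format log line into a dict, or None if it does not match."""
    match = COMBINED_LOG.match(line)
    if match is None:
        return None
    event = match.groupdict()
    # mutate/convert step: numeric fields become integers.
    event["response"] = int(event["response"])
    event["bytes"] = 0 if event["bytes"] == "-" else int(event["bytes"])
    # date step: "dd/MMM/YYYY:HH:mm:ss Z" becomes a UTC @timestamp,
    # and the original timestamp field is removed.
    parsed = datetime.strptime(event.pop("timestamp"), "%d/%b/%Y:%H:%M:%S %z")
    event["@timestamp"] = parsed.astimezone(timezone.utc)
    return event
```

The GeoIP and user agent lookups are left out, since they depend on databases that ship with Logstash.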

Now let’s start the Logstash process and verify that it is listening on the correct port:

sudo systemctl enable logstash

sudo systemctl restart logstash.service

sudo netstat -tulpn | grep 5400

The output of the last command should be similar to:

tcp6 0 0 :::5400 :::* LISTEN 21329/java

If it does not work, you can check out the troubleshooting guide at the end of the post.
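The same port check can be scripted, which is handy for running it from the client server as well. This is a minimal sketch with a hypothetical helper name; it only tests that a TCP connection can be opened and does not perform the SSL handshake that Filebeat will do.

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unresolvable host names.
        return False


# Usage:
#   port_is_open("localhost", 5400)             # on the ELK server
#   port_is_open("my-elk-stack-vps.com", 5400)  # from the client server
```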

Filebeat

Filebeat is the only part of the infrastructure that needs to be installed on the client server. Log in to the server running your NGINX application and copy the self-signed SSL certificate files to the correct folder:

/etc/elk-certs/elk-ssl.crt

/etc/elk-certs/elk-ssl.key

You can use SCP to do it or just copy and paste the contents of the files.

Now install Java using the same commands as on the main ELK host server. (Filebeat itself is a Go binary and does not need Java, so this step is optional.)

sudo apt install default-jdk

java --version

Then install the rest of the dependencies:

wget -qO - https://artifacts.elastic.co/GPG-KEY-elasticsearch | sudo apt-key add -

echo "deb https://artifacts.elastic.co/packages/7.x/apt stable main" | sudo tee -a /etc/apt/sources.list.d/elastic-7.x.list

sudo apt-get update

sudo apt-get install filebeat

Now configure Filebeat by modifying this file: /etc/filebeat/filebeat.yml

filebeat.inputs:
- type: log
  paths:
    - /var/log/nginx/*.log
  exclude_files: ['\.gz$']

output.logstash:
  hosts: ["my-elk-stack-vps.com:5400"]
  ssl.certificate_authorities: ["/etc/elk-certs/elk-ssl.crt"]
  ssl.certificate: "/etc/elk-certs/elk-ssl.crt"
  ssl.key: "/etc/elk-certs/elk-ssl.key"

This config tells Filebeat where to send our logs and which SSL certificates to use for authentication. The paths option points to the default NGINX logs folder.

If you have had NGINX running for a while, you probably have a bunch of gzipped logs in /var/log/nginx/. To send them to Kibana, unzip them using gunzip and rename the resulting files so that they match the *.log wildcard expression.
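If there are many rotated files, the unzip-and-rename step can be scripted. The following Python sketch is one possible way to do it, with a hypothetical function name; it leaves the original .gz files in place (Filebeat skips them through exclude_files) and writes a decompressed copy whose name ends in .log.

```python
import gzip
import shutil
from pathlib import Path


def unpack_rotated_logs(log_dir):
    """Decompress every *.gz file in log_dir into a sibling file ending in .log.

    Returns the list of files that were created.
    """
    created = []
    for gz_path in sorted(Path(log_dir).glob("*.gz")):
        # access.log.2.gz -> access.log.2.log, which matches the *.log wildcard.
        target = gz_path.with_suffix(".log")
        if target.exists():
            continue  # already unpacked on an earlier run
        with gzip.open(gz_path, "rb") as src, open(target, "wb") as dst:
            shutil.copyfileobj(src, dst)
        created.append(target)
    return created


# Usage (needs write permission on the log folder):
#   unpack_rotated_logs("/var/log/nginx")
```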

You might also consider disabling access logging for static resources to limit the noise. You can do it with the following NGINX config:

location ~* \.(?:ico|css|js|gif|jpe?g|png|woff2)$ {
    root /home/ubuntu/www;
    add_header Cache-Control "public, max-age=1382400, immutable";
    access_log off;
    try_files $uri =404;
}

Raw logs are here

If everything went fine, go to the Kibana dashboard and create an index pattern called weblogs-*. You can do it in the Management menu tab. After that, you can go to Discover and see your raw log data there.
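The weblogs-* pattern works because the Logstash output writes to one index per day, named from the index setting weblogs-%{+YYYY.MM.dd}, with the date taken from the event's @timestamp in UTC. The short Python sketch below illustrates that naming; `daily_index_name` is a hypothetical helper, and `fnmatch` only approximates how Kibana matches the wildcard.

```python
from datetime import datetime, timezone
from fnmatch import fnmatch


def daily_index_name(event_time, prefix="weblogs-"):
    """Name of the daily index an event lands in, derived from its UTC date."""
    return prefix + event_time.astimezone(timezone.utc).strftime("%Y.%m.%d")


# An event from 1 June 2018 ends up in weblogs-2018.06.01,
# which the weblogs-* index pattern picks up.
example = daily_index_name(datetime(2018, 6, 1, 15, 25, 47, tzinfo=timezone.utc))
matches_pattern = fnmatch(example, "weblogs-*")
```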

This is what a raw JSON entry stored in Elasticsearch for a single NGINX log event looks like after being parsed by Logstash:

{

"_index": "weblogs-2018.06.01",

"_type": "nginx_logs",

"_id": "UQX6wGMBnPkSpi68QAq6",

"_version": 1,

"_score": null,

"_source": {

"@timestamp": "2018-06-01T15:25:47.000Z",

"source": "/var/log/nginx/pawel-blog-access-1.log",

"input_type": "log",

"os_name": "Ubuntu",

"request": "/profitable-slack-bot-rails",

"agent": "\"Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:61.0) Gecko/20100101 Firefox/61.0\"",

"message": "157.234.132.47 - - [01/Jun/2018:17:25:47 +0200] \"GET /profitable-slack-bot-rails HTTP/1.1\" 200 12104 \"https://news.ycombinator.com/\" \"Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:61.0) Gecko/20100101 Firefox/61.0\"",

"device": "Other",

"clientip": "157.234.132.47",

"response": 200,

"type": "log",

"httpversion": "1.1",

"host": "pawel-blog",

"referrer": "\"https://news.ycombinator.com/\"",

"build": "",

"tags": [

"beats_input_codec_plain_applied",

"nginx-geoip"

],

"beat": {

"hostname": "pawel-blog",

"version": "6.2.4",

"name": "pawel-blog"

},

"os": "Ubuntu",

"@version": "1",

"offset": 571753,

"geoip": {

"latitude": -26.2309,

"longitude": 28.0583,

"country_code3": "ZA",

"timezone": "Africa/Johannesburg",

"region_name": "Gauteng",

"ip": "157.234.132.47",

"postal_code": "2000",

"continent_code": "AF",

"city_name": "Johannesburg",

"country_name": "South Africa",

"region_code": "GT",

"country_code2": "ZA",

"location": {

"lon": 28.0583,

"lat": -26.2309

}

},

"ident": "-",

"verb": "GET",

"name": "Firefox",

"minor": "0",

"bytes": 12104,

"major": "61",

"auth": "-"

},

"fields": {

"@timestamp": [

"2018-06-01T15:25:47.000Z"

]

}

}

Troubleshooting

As you can see, you need to make various components play together to get the ELK stack running. Here’s a list of commands that can help you debug when things go wrong:

Filebeat logs:

tail -f /var/log/filebeat/filebeat

Logstash logs:

tail -f /var/log/logstash/logstash-plain.log

Start a Filebeat process in the foreground to see if it can connect to Logstash on the host ELK server:

/usr/share/filebeat/bin/filebeat -c /etc/filebeat/filebeat.yml -e -v

Start a Logstash process in the foreground to check why it’s not listening on a port:

/usr/share/logstash/bin/logstash --debug

Summary

I am just getting started with the ELK (Elastic) stack and discovering the options it has to offer. I hope this tutorial helps you get up and running with it quickly, even if you don’t have much DevOps experience.